We’re No Longer Just Building AI—We’re Living With It

We’ve entered a new era of artificial intelligence—one where it’s no longer about building sophisticated models tucked away in isolated research labs or innovation centers. AI is now deeply woven into the fabric of our daily lives. It shapes what we read, how we work, and the decisions made around us—often without us even realizing it.

This transformation has been both subtle and profound. What was once the exclusive domain of data scientists—crafting machine learning models for backend systems—has evolved into something far more accessible. Today, anyone with a code editor, a low-code tool, or even just a prompt can build and deploy an AI-driven application in minutes.

We’ve democratized the creation of AI. But in doing so, we’ve also amplified the stakes.

In this new landscape, building an AI application is no longer the hard part. What’s far more complex—and critical—is managing and governing it responsibly. As AI systems proliferate across industries and use cases, we need stronger frameworks to navigate the ethical, operational, and regulatory challenges they bring.

When AI becomes this accessible, the guardrails become even more important. Yet many organizations still treat AI governance as a bolt-on or, worse, an afterthought. Principles like fairness, transparency, and accountability sound good in theory but often get lost somewhere between a design sprint and deployment, left to rot in an Excel worksheet as a box-ticking exercise.

Organizations can’t afford to be reactive. They must take deliberate action now, before they find themselves cleaning up after unintended consequences that could have been prevented. The time for discussing principles has passed; what we need now is a clear, actionable path forward.

Ever since the EU AI Act came into force, I’ve been reflecting deeply on what Responsible AI truly demands from us. Having spent many years building solutions in the regulatory space for banks and financial institutions, I find this topic isn’t just professional; it’s personal. It’s something I care about deeply.

I’ve been sketching, reworking, and challenging myself to put on paper a governance model that’s not just theoretical but truly usable. One that speaks to the practical realities of building, deploying, and scaling AI responsibly in complex organizations.

Because the question isn’t just “How do we build AI?” It’s “How do we scope, design, deploy, and sustainably govern AI applications—end to end?”

We need a lifecycle approach—one that starts at the moment of ideation and continues through to ongoing oversight. We need shared accountability across product, tech, legal, compliance, and business teams. And we need to embed ethical guardrails not just at the point of launch, but throughout the entire journey.

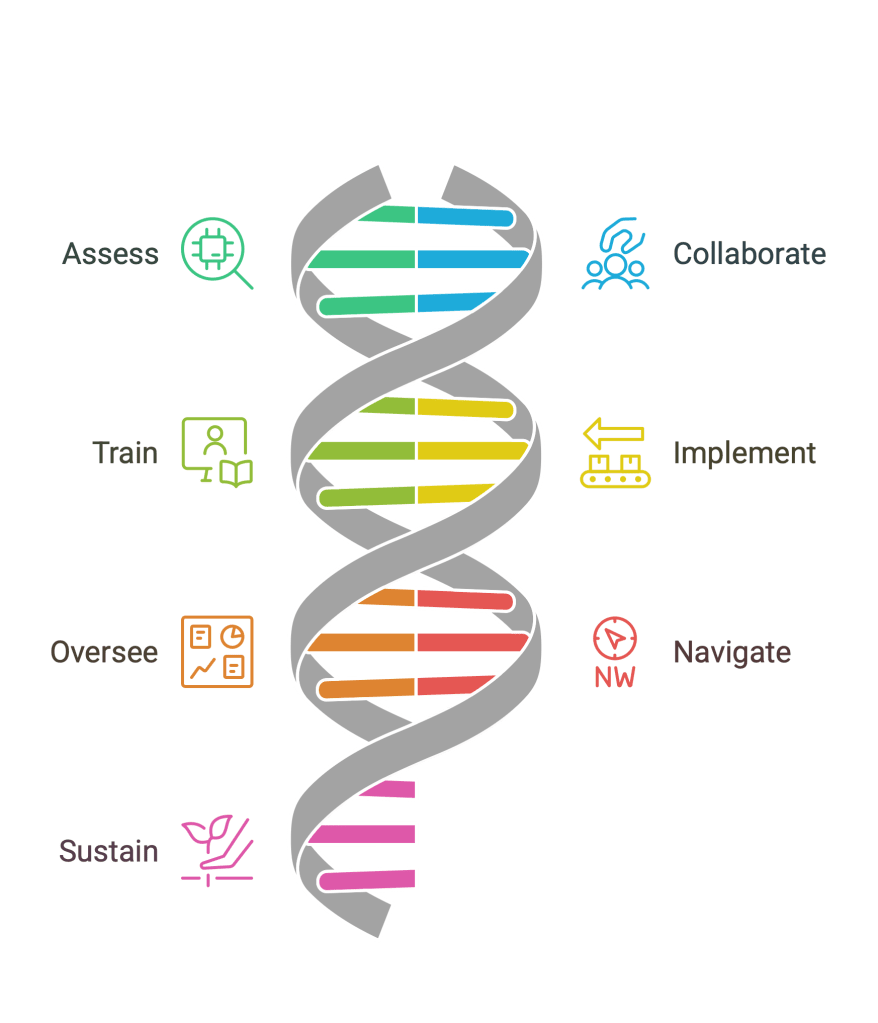

This line of thinking led me to create a simple yet comprehensive model, A.C.T.I.O.N.S., to help teams operationalize Responsible AI from strategy to execution.

If this framework resonates with you, feel free to use it within your organization—and I’d genuinely welcome any feedback to help shape and improve it. My goal is to keep evolving this model—from a concept, to code, to a working end-to-end application that enables organizations to apply A.C.T.I.O.N.S. as a practical toolkit for managing AI applications responsibly.

One Click Deeper into A.C.T.I.O.N.S

At its core, A.C.T.I.O.N.S is my attempt at a pragmatic approach for turning Responsible AI from abstract principles into daily operational practice. I believe that it should not be an activity outside the lifecycle of an AI application; it should be embedded into the end-to-end development-to-deployment lifecycle and continue as a living feedback loop once the application is out there for people to use.

Here’s a closer look at what each action entails. A.C.T.I.O.N.S must be embedded into the DNA of an organization when it comes to managing AI. It’s not just something to be bolted on at the end; it should be woven into the very fabric of the organization—from the design principles and architecture to the onboarding process for new employees.

AI governance isn’t the responsibility of just one team or individual. To treat it as such would be the biggest mistake an organization could make. AI governance is everyone’s responsibility. It requires collaboration, shared ownership, and a collective commitment across all levels and functions of the organization.

A – Assess

When we talk about Assess, it’s important to recognize that no organization is truly starting from scratch when it comes to using or building AI applications. Even if you don’t have a dedicated team of data scientists developing custom AI models, chances are you’re already leveraging AI in some form.

Whether it’s a product you’re using internally or a tool embedded into the applications your teams are already familiar with, AI is likely already playing a role. Take a moment and think about the tools your team uses daily—perhaps it’s a SaaS AI tool accessed directly through the browser like ChatGPT, Napkin.ai, or even something more specialized like PeopleGPT.

AI is already deeply integrated into your workflows, often in ways that may not be immediately obvious. The key is to assess where AI is being used, its impact, and its potential risks, from both a governance and an ethical standpoint. Assessment is not a one-time audit; it’s a continuous process and commitment.
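One way to make this continuous assessment tangible is a lightweight inventory of where AI is used and when each use was last reviewed. The sketch below is only an illustration: the `AIUsage` record, the risk labels, the sample entries, and the 90-day review interval are all assumptions made for the example, not prescribed by the framework.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review interval; choose whatever cadence suits your organization.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class AIUsage:
    """One entry in an (assumed) inventory of AI tools and applications."""
    name: str
    owner: str
    kind: str            # e.g. "saas", "embedded", "in-house"
    risk: str            # illustrative labels: "low", "medium", "high"
    last_assessed: date


def due_for_assessment(inventory, today):
    """Return entries that are high risk or whose last assessment is stale."""
    return [
        u for u in inventory
        if u.risk == "high" or today - u.last_assessed > REVIEW_INTERVAL
    ]


# Sample data, invented purely for illustration.
inventory = [
    AIUsage("ChatGPT (browser)", "marketing", "saas", "medium", date(2025, 1, 10)),
    AIUsage("Credit scoring model", "risk", "in-house", "high", date(2025, 5, 1)),
    AIUsage("Email autocomplete", "it", "embedded", "low", date(2025, 5, 20)),
]

for usage in due_for_assessment(inventory, today=date(2025, 6, 1)):
    print(f"{usage.name}: reassess (owner: {usage.owner})")
```

Running a check like this on a schedule, rather than once, is what turns the inventory from an audit artifact into an ongoing commitment.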

C – Collaborate

The biggest shift organizations face with AI is that its impact isn’t confined to just one team. AI touches multiple functions, business streams, teams, and user personas across the entire organization. This is a transformative force that transcends boundaries.

AI governance is not the responsibility of a single team. Rather, it’s a shared responsibility that requires collaboration at all levels. From technology teams, architects, and data scientists to product managers, legal experts, ethics and compliance teams, and even HR—AI governance demands that everyone is aligned and engaged.

In short, AI governance is a collective responsibility, and its success hinges on cross-functional collaboration.

T – Train

From interns to the CEO, everyone in your organization must speak the same language when it comes to AI governance. It’s critical that all team members—regardless of role—understand the basics of AI governance, ethical risks, and regulatory obligations.

Training is essential to building awareness and reducing the likelihood of unintended misuse or non-compliance. But here’s the key: training cannot just be a box to check off. It needs to be continuous, engaging, and relevant. Gone are the days of dry PowerPoint presentations and disconnected modules. Training should be interactive, dynamic, and designed to keep people actively involved in learning, ensuring they stay informed and prepared to act in the face of evolving challenges. And it has to be updated, literally every week, to keep pace with developments in the world of AI.

I – Implement

This is a crucial point: AI governance cannot be managed through manual, Excel-based processes. To be effective, it must be automated and integrated directly into the AI applications. The principles and policies of responsible AI must be put into practice at every stage of the AI lifecycle—no manual activity should be involved.

Ethical guidelines, checklists, and compliance steps need to be embedded into the AI development-to-deployment pipeline. This ensures that every phase, from ideation to post-deployment monitoring, adheres to governance principles seamlessly—without the risk of human error or oversight.
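To illustrate what embedding checks into the pipeline could look like, here is a minimal sketch of a governance gate that a deployment step might call. The check names, the `release` metadata fields, and the `governance_gate` function are hypothetical; real checks would come from your own policies and tooling.

```python
from typing import Callable, Dict, List

# Hypothetical release metadata that a pipeline step might collect.
Release = Dict[str, object]
Check = Callable[[Release], bool]

# Illustrative checks only; real ones would encode your own policies.
CHECKS: Dict[str, Check] = {
    "model card present": lambda r: bool(r.get("model_card")),
    "bias evaluation passed": lambda r: r.get("bias_eval_passed") is True,
    "accountable owner assigned": lambda r: bool(r.get("owner")),
}


def failed_checks(release: Release) -> List[str]:
    """Return the names of all governance checks the release fails."""
    return [name for name, check in CHECKS.items() if not check(release)]


def governance_gate(release: Release) -> None:
    """Raise if any check fails, so the pipeline step stops the deployment."""
    failures = failed_checks(release)
    if failures:
        raise RuntimeError("Governance gate failed: " + ", ".join(failures))


# Example release, invented for illustration.
release = {
    "model_card": "docs/model_card.md",
    "bias_eval_passed": False,
    "owner": "risk-team",
}
print(failed_checks(release))
```

Because the gate raises an error instead of writing to a spreadsheet, a failed check blocks the release in the same way a failing unit test would.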

O – Oversee

Automated monitoring mechanisms are essential—not just for tracking model performance, but also for ensuring that AI applications remain aligned with the principles of Responsible AI over time. Any deviations from these principles must be treated as incidents and reported with a detailed explanation from the concerned teams.

This reporting should be automated and seamlessly integrated into the organization’s workflow. AI governance boards must receive real-time updates on these incidents, with thresholds, alerts, and a periodic review process in place to assess AI applications in production.

From changes in data schema, to model weights, and even modifications in the thresholds of AI principles, everything must be monitored, and deviations should be flagged and reported without exception. This ensures that AI systems operate within defined ethical and governance boundaries throughout their lifecycle.
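As a rough sketch of threshold-based oversight, the example below compares observed metrics against allowed ranges and turns every deviation into an incident record. The metric names, the threshold values, and the `Incident` structure are assumptions chosen for illustration; how incidents reach a governance board is left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical thresholds: (minimum allowed, maximum allowed) per metric.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "accuracy": (0.90, 1.00),
    "demographic_parity_gap": (0.00, 0.05),
}


@dataclass
class Incident:
    """A deviation from a governance threshold, ready to be reported."""
    application: str
    metric: str
    value: Optional[float]
    allowed: Tuple[float, float]


def detect_incidents(application: str, metrics: Dict[str, float]) -> List[Incident]:
    """Compare observed metrics with thresholds; each breach becomes an incident."""
    incidents = []
    for metric, (low, high) in THRESHOLDS.items():
        value = metrics.get(metric)
        # A missing metric also counts as a deviation: nothing goes unmonitored.
        if value is None or not (low <= value <= high):
            incidents.append(Incident(application, metric, value, (low, high)))
    return incidents


# Observed values, invented for illustration.
observed = {"accuracy": 0.93, "demographic_parity_gap": 0.08}
for incident in detect_incidents("loan-approval", observed):
    print(f"INCIDENT {incident.application}: "
          f"{incident.metric}={incident.value} outside {incident.allowed}")
```

The same pattern could be extended to schema or model-weight changes by comparing a stored fingerprint with the current one, though that is not shown here.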

N – Navigate

Have you noticed that what you knew about AI last week might already be obsolete, or that something new has emerged since? The rapid pace of change in AI means that knowledge, tools, and best practices are constantly evolving. The same applies to AI governance models.

Governance models must be designed not just for compliance but for adaptability. Organizations need to stay agile and responsive to shifts in technical, ethical, and regulatory landscapes. What’s relevant today might be outdated in a year. Therefore, governance frameworks must be flexible enough to evolve alongside these changes, ensuring that the AI systems remain aligned with emerging trends, laws, and ethical considerations.

S – Sustain

The most important piece of the puzzle is people. And with people comes the need for a strong structure and an evolving culture. Organizations must build a culture that supports long-term accountability.

AI Governance is not a one-off project. It requires iterations, ownership, transparency, and ongoing accountability over time. To be effective, AI governance models and frameworks must be sustainable—designed for the long-term impact of AI on the organization’s people, processes, and culture.

This isn’t about simply meeting immediate regulatory requirements or ethical considerations. It’s about embedding AI governance into the organization’s DNA, where it evolves, adapts, and continues to foster responsible AI practices long into the future.

As you can see, AI governance needs Action—and with this framework, you can take structured, actionable steps that drive lasting change and ensure AI’s positive impact within your organization.

In the coming weeks, I will continue to refine this framework and work towards making it more tangible—something that you can apply directly within your own teams and processes. I look forward to your feedback and invite you to join me in evolving A.C.T.I.O.N.S. into more than just a framework. Together, we can transform it into a fully integrated, end-to-end solution that can be embedded seamlessly into the ecosystem of any organization.

Let’s collaborate and build AI governance that not only works but thrives in the real world.

A.C.T.I.O.N.S for AI Governance by Mayank Srivastava is licensed under CC BY-NC-SA 4.0
